Factor Two Ryzen Java Performance Gain

Standard benchmarks you can find on the internet are of limited value when you use your machine

for serious computations based on your own code. My machines often run for several days utilizing

all available CPU cores, so performance is a serious issue, as is power consumption considering the

insane power price we pay here in Germany.

After AMD released the Ryzen processor I was quite disappointed by their multi threaded

performance at my application. Recently I found out what was the source of the problem and that

it can be solved. I want to share this information in case someone faces a similar issue.

Finally it seems Ryzen Threadripper 1950x, if configured and used correctly, can handle an incredible

amount of workload. It turned out, that for multi threaded Java applications heavily using JNI there

are huge improvements using Java 9 or Java 10 compared to Java 8 I haven't found documented

anywhere yet. These improvements are already significant for Intel CPUs, but quite dramatic for

AMD Ryzen.

Space Flight Trajectory Optimization

Since the movie "The Martian" space flight optimization got introduced to a wider audience

There is a world wide yearly competition around this topic called GTOC

https://sophia.estec.esa.int/gtoc_portal where I am participating since 2010.

Optimizing trajectories requires a lot of CPU resources. How much is dependent on:

a) CPU

b) Operating System

c) Efficiency of the used algorithms

d) JDK/Java/JVM version - my implementation is in Java + C++ called via JNI.

In order to be competitive I always try to improve on all four fronts.

Used Hardware / Software

As operating system I use Linux Mint 18.2 (based on Ubuntu 16.04). The base optimization algorithm I

use is called "CMA Evolution Strategy" https://arxiv.org/pdf/1604.00772.pdf , which scales very well

with the number of CPU cores and outperforms the popular particle swarm optimization algorithm

(PSO) by a reasonable margin http://homepage.usask.ca/~nak158/docs/cmaes2015.pdf .

My two Threadrippers 1950x are used in Numa/local memory mode

restricting the number of threads to 16 for each optimization run. This way, executing two optimizations

in parallel - each using 16 threads in "NUMA" mode - I got the CPU fully utilized, which was not

possible using the default "UMA" mode. In "NUMA" mode the 1950x is more or less equivalent to

two Ryzen 8-cores, but with much less combined power consumption.

As CMA-ES implementation a C++ variant of my Apache Commons Math contribution of the CMA-ES

algorithm https://github.com/apache/commons-math/blob/master/src/main/java/org/apache/commons/math4/optim/nonlinear/scalar/noderiv/CMAESOptimizer.javais used, which additionally supports mirroring and multi threading. This C++ code is called from Java

using JNI.

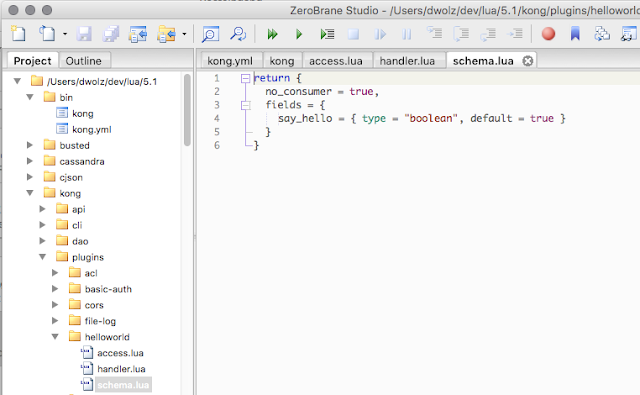

Flying to 2016 HO3

To reproduce the performance issue with Java 8 I used two real world application scenarios related to

the search for a trajectory from earth to asteroid (469219) 2016 HO3, the smallest and closest Earth

quasi-satellite - see https://arxiv.org/pdf/1608.01518.pdf .

The trajectory is computed in two phases:

1) Monte Carlo search to find the optimal start / landing time

2) Optimization of the final transfer using a low thrust (ion thruster) flight model.

Both are quite typical space trajectory design / optimization tasks.

Here is a computed transfer (black) from earth (blue) to 2016 HO3 (red):

Java performance evaluations are tricky, you need a ramp up phase executing the code without

measuring to wait for the JVM to optimize / compile the code which it does dynamically after evaluating

internal performance metrics. Then we perform 20 runs and report both the mean value and the

standard deviation of these 20 timings.

For Java 8 we used “Java HotSpot(TM) 64-Bit Server VM (build 25.171-b11, mixed mode)”,

for “Java 9 "OpenJDK 64-Bit Server VM (Zulu build 9.0.4.1+11, mixed mode)”,

for Java 10 “Java HotSpot(TM) 64-Bit Server VM 18.3 (build 10.0.1+10, mixed mode)”.

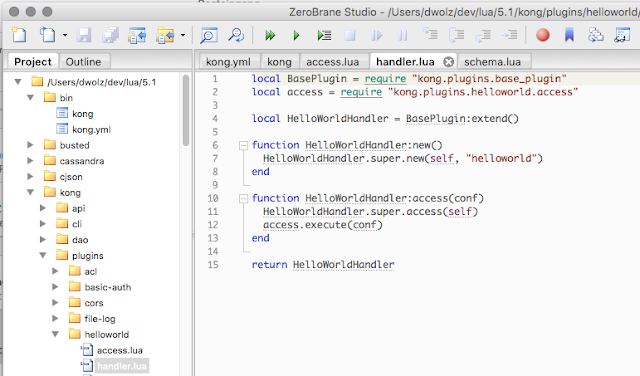

Monte Carlo Search Benchmark

For the Monte Carlo search we run 1000 CMA-ES optimizations each evaluating 5.000 times a

5-segment lambert arc flight path approximation. On Ryzen 16 CMA-ES optimizations are executed

in parallel, where each CMA-ES optimization is single threaded. Since I don't have a new Intel

machine I used an older 6-core i7-6800K CPU @ 4.0GHz for comparison, where 12 optimizations

are executed in parallel. Using Java 8 the old i7-6800K easily outperforms the Ryzen @ 3.9Ghz.

Results for i7-6800K CPU @ 4.0GHz

20 runs

|

mean

|

sdev

|

min

|

max

|

Java 8

|

10462 ms

|

64 ms

|

10359 ms

|

10595 ms

|

Java 9

|

8137 ms

|

152 ms

|

8024 ms

|

8558 ms

|

Java 9 2nd parallel exec

|

16705 ms

|

314 ms

|

16298 ms

|

17500 ms

|

On the Intel processor we see already a hefty 25% performance gain using Java 9.

Results for AMD Ryzen Threadripper 1950X @ 3.9GHz

20 runs

|

mean

|

sdev

|

min

|

max

|

Java 8

|

12326 ms

|

251 ms

|

11717 ms

|

12839 ms

|

Java 9

|

5725 ms

|

360 ms

|

5127 ms

|

6622 ms

|

Java 9 2nd parallel exec

|

5874 ms

|

389 ms

|

5421 ms

|

6970 ms

|

Java 10

|

5906 ms

|

264 ms

|

5496 ms

|

6543 ms

|

These results show a serious problem for the Ryzen/Java 8 combination. Only using Java 9 or Java 10

the Ryzen can outperform the old Intel 6800k, it is more than twice as fast than with Java 8.

CMA-ES Final Optimization Benchmark

For the final transfer optimization we execute a single multi threaded CMA-ES optimization evaluating

200.000 times an 8-segment low thrust flight model using the GraggBulirschStoerIntegrator from

Apache Commons Math. Since only the function evaluations are executed in parallel, we have much

less CPU utilization and the Java 9 advantage is less dramatic.

Results for i7-6800K CPU @ 4.0GHz

20 runs

|

mean

|

sdev

|

min

|

max

|

Java 8

|

15551 ms

|

297 ms

|

14705 ms

|

16003 ms

|

Java 9

|

14825 ms

|

666 ms

|

13123 ms

|

15757 ms

|

On the Intel processor we now see only a moderate advantage for Java 9. But regardless of the Java

version Ryzen is superior. Would be interesting to see how newer Intel processors perform here.

Results for AMD Ryzen Threadripper 1950X @ 3.9GHz

20 runs

|

mean

|

sdev

|

min

|

max

|

Java 8

|

10895 ms

|

471 ms

|

9818 ms

|

12187 ms

|

Java 9

|

9641 ms

|

180 ms

|

9313 ms

|

10017 ms

|

Java 10

|

10395 ms

|

974 ms

|

9564 ms

|

13213 ms

|

Difference between Java versions is similar to the Intel results, again Java 9 is the winner.

The Java 8 / Ryzen multithreading problem seems related to CPU utilization which is much lower in

this experiment.

We also tried a new 8-core Ryzen 2 2700x processor, which can be slightly higher clocked than the

1950x, but showed nevertheless very similar results - of course it makes no sense to execute a

second run in parallel on the 2700x since it has only 8 cores.

To illustrate that the used algorithms are competitive: https://youtu.be/7QxikroB-6Q shows a solution

for the GTOC4 competition

which is significantly better than the solution which won the competition.